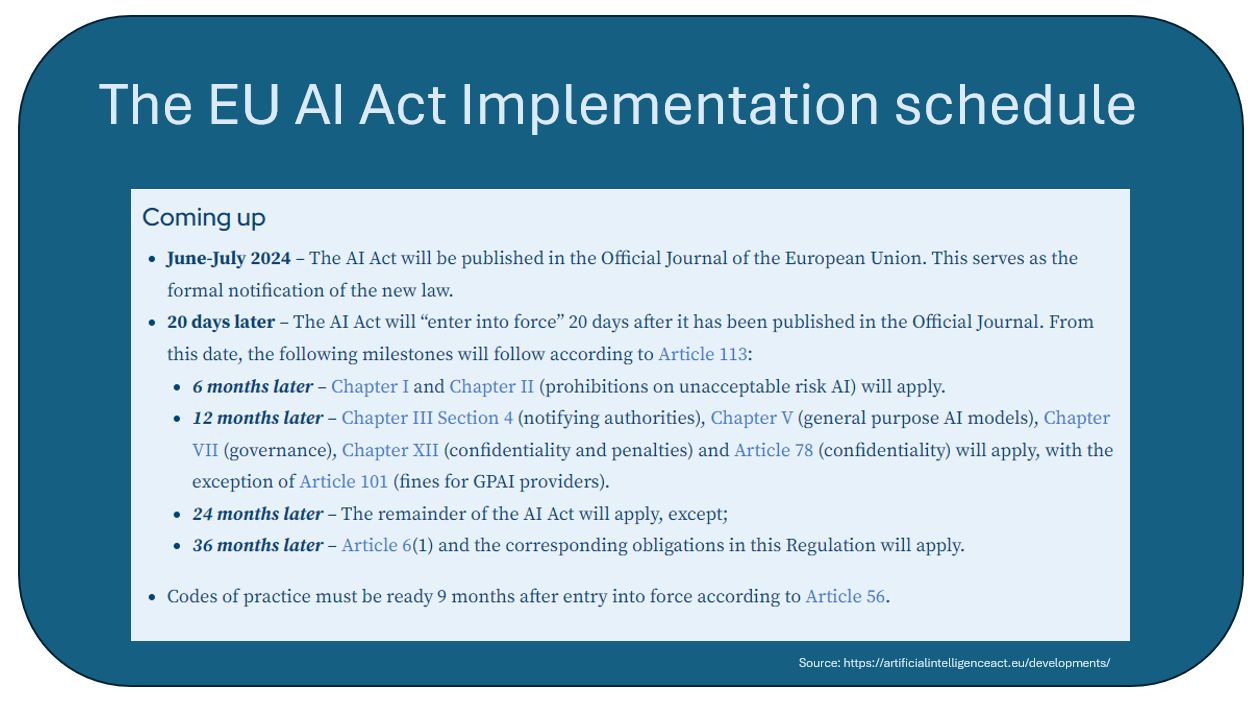

Artificial Intelligence is no longer a futuristic concept; it's here. It transforms industries, reshapes how we live and work, and raises crucial questions about its management and governance. Regulators are scrambling to stay relevant and provide timely protection to everyone. As usual, Europe is leading the way, with the EU AI Act expected to be fully applicable by 2026.

In the US, AI regulation is primarily driven by the Biden Administration’s Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence issued in October 2023. Australia is also in the process of developing its AI regulatory framework. In January 2024, the Australian Government announced plans to consider mandatory safeguards for high-risk AI applications.

New Zealand is expected to follow a similar path to Australia, focusing on developing a regulatory framework that ensures responsible AI deployment and compliance with international standards.

The UK has adopted a more flexible, regulator-led approach to AI regulation. Instead of a comprehensive AI act, UK regulators like the Bank of England and the Financial Conduct Authority (FCA) are focusing on integrating AI governance within existing frameworks for data protection and operational resilience.

The UK government is also exploring the establishment of an AI authority to coordinate regulation across industries and is employing sandboxes for testing AI technologies. In addition, the Information Commissioner’s Office (ICO) has initiated consultations on the application of data protection laws to generative AI.

Whichever way those regulations are finalized, they revolve around five key principles:

- Fairness and non-discrimination: AI systems should treat all individuals and groups impartially, equitably, and free from prejudice. Regulators should assess systems for potential bias and ensure transparency around decisions.

- Transparency and explainability: AI systems should be transparent about their development, capabilities, limitations, and real-world performance. Developers should provide clear explanations of how AI decisions are made.

- Accountability and human oversight: Organizations remain responsible for AI systems and their impacts. There should be appropriate oversight and controls to ensure AI remains human-centric.

- Robustness and security: AI systems should reliably behave as intended while minimizing unintentional and potentially harmful consequences. Systems should be designed to anticipate and mitigate threats like hacking.

- Privacy and data protection: The privacy rights of individuals, groups, and communities should be respected through adequate data governance. The collection, use, storage, and sharing of data must be handled sensitively and lawfully.

With new AI regulations springing up around the globe, any company using AI risks finding itself in breach of some of them, and that prospect is costing compliance and governance officers sleep. This is where ISO/IEC 42001 comes in handy.

What is ISO/IEC 42001?

ISO/IEC 42001 is the world's first international standard for AI management systems. It provides a comprehensive framework for establishing, implementing, maintaining, and continually improving AI management within organizations. Whether you're developing, providing, or using AI-based products or services, this standard offers a structured approach to navigating the complex AI landscape.

Who Should Care About ISO/IEC 42001?

The short answer? Everyone involved with AI. Whether you're a tech giant, a startup, or a public sector agency, if AI is part of your operations, ISO/IEC 42001 is relevant to you. It is designed to be applicable across all industries and scales.

Key Benefits of Implementing ISO/IEC 42001:

- Responsible AI: The standard ensures accountable and ethical use of AI, helping organizations align with societal values and build trust.

- Enhanced Trust and Reputation: Adhering to ISO/IEC 42001 signals a commitment to responsible AI practices, potentially mitigating reputational risks associated with AI misuse.

- Improved AI Governance: The framework assists in aligning AI practices with relevant laws and regulations.

- Effective Risk Management: Practical guidance on identifying and assessing AI-specific risks enhances AI systems’ robustness and reliability.

- Structured Innovation: The framework enables exploring and implementing AI technologies within defined parameters, striking a balance between innovation and risk management.

The Nuts and Bolts of the ISO/IEC 42001 Framework

ISO/IEC 42001 includes four annexes: two provide normative guidance and two offer supplemental information. Their combined key components include:

- AI controls to meet organizational objectives and address risks.

- Data documentation requirements, including processes for labeling training and testing data.

- Impact assessment considerations, covering areas like fairness, transparency, explainability, and security.

A Closer Look at the Annexes

ISO/IEC 42001 annexes are meant to:

- Provide comprehensive coverage: Together, these annexes provide a 360-degree view of AI management, from high-level strategy to granular implementation details.

- Be flexible and scalable: The framework adapts to various organizational contexts including:

  - Organization Size

  - AI Maturity Levels

  - Industry-Specific Implementation

  - Available Resources

  - Phased Implementation

  - Customizable Documentation

  - Flexible Auditing Processes

  - Adaptable Training Programs

- Facilitate risk mitigation: By following the guidance in these annexes, organizations can proactively identify and address potential AI-related risks such as:

  - Risk Identification: Example: An organization might use these guidelines to recognize that their facial recognition AI could have bias issues in diverse populations.

  - Risk Assessment: Example: A healthcare organization might assess the potential impact of an AI misdiagnosis in their radiology department.

  - Risk Mitigation Strategies: Example: For an AI-driven financial trading system, the organization might implement human oversight protocols for trades above a certain threshold.

  - Ethical Considerations: Example: An HR department using AI for resume screening might implement checks to ensure the system doesn't discriminate based on protected characteristics.

  - Data-Related Risks: Example: A retail company might implement strict data anonymization processes for customer data used in their recommendation AI.

  - Transparency and Explainability: Example: A bank using AI for loan approvals might develop a system to explain the factors influencing each decision.

- Ensure ethical behavior: The annexes emphasize the importance of fairness, transparency, and accountability in AI systems, helping organizations build trust with stakeholders.

- Enable continuous improvement: The annexes encourage ongoing evaluation and refinement of AI management practices so that they stay relevant in a rapidly evolving field.
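To make the risk mitigation example above more concrete (human oversight for trades above a certain threshold), here is a minimal Python sketch of such a routing rule. It is an illustration only and is not taken from the standard: the `Trade` type, the `REVIEW_THRESHOLD` value, and the `route_trade` function are hypothetical names, and a real threshold would come from the organization's own risk assessment.

```python
from dataclasses import dataclass

# Hypothetical threshold; a real value would come from the organization's risk assessment.
REVIEW_THRESHOLD = 100_000.0


@dataclass(frozen=True)
class Trade:
    symbol: str
    notional: float  # trade value in the firm's base currency


def route_trade(trade: Trade, threshold: float = REVIEW_THRESHOLD) -> str:
    """Send trades above the threshold to a human reviewer; let the rest execute automatically."""
    if trade.notional > threshold:
        return "human_review"
    return "auto_execute"


if __name__ == "__main__":
    for trade in (Trade("ABC", 25_000.0), Trade("XYZ", 250_000.0)):
        print(trade.symbol, route_trade(trade))
```

Keeping the rule in one small function makes the oversight boundary easy to document and audit, which is the point of the control.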

Annex A: Normative Controls

Annex A is a cornerstone of the standard. It lists the reference control objectives and controls an organization draws on to meet its objectives and address AI-related risks.

Key aspects include:

- Organizational context and leadership requirements

- Planning processes for AI risk management

- Operational controls for AI development and deployment

- Performance evaluation and continual improvement measures

The importance of Annex A lies in its practical, actionable guidance. It provides a concrete framework for organizations to assess their current AI practices and identify areas for improvement.

Annex B: Implementation Guidance for AI Controls

This annex offers invaluable implementation guidance, focusing on the nitty-gritty of AI system management. Highlights include:

- Detailed processes for documenting organizational data used in machine learning.

- Guidelines for labeling data for training and testing.

- Best practices for assessing the impact of AI systems on groups and individuals.

Annex B's significance cannot be overstated. It bridges the gap between theoretical standards and practical application, helping organizations navigate the complex landscape of AI implementation.

Annexes C and D: Supplemental Information

While Annexes A and B provide normative guidance, Annexes C and D are informative and offer supplemental information to enhance understanding and implementation. These annexes cover:

- Potential AI-related organizational objectives and risk sources (Annex C)

- Use of the AI management system across domains or sectors (Annex D)

The supplemental annexes are crucial for organizations new to AI or those looking to deepen their understanding of AI management best practices.

Putting It All Together

The annexes of ISO/IEC 42001 work in concert to provide a robust, comprehensive approach to AI management, from the normative controls in Annex A to the practical guidance in Annex B and the supplemental information in Annexes C and D.

Practical Implementation of ISO/IEC 42001

Implementing ISO/IEC 42001 involves integrating the AI management system into existing organizational structures. Let's break down the key components:

Documenting the justification for AI system development

- Identifying specific business needs or problems the AI system will address

- Explaining how AI offers advantages over traditional solutions

- Outlining expected benefits and potential risks

- Demonstrating alignment with the organization's overall strategy and goals

Outlining when and why the system will be used

- Specifying use cases and scenarios where the AI system will be applied

- Defining the scope of the system's decision-making authority

- Establishing clear boundaries for human oversight and intervention

- Considering the impact on existing processes and workflows

Establishing metrics to measure performance

- Defining key performance indicators (KPIs) specific to the AI system

- Setting up mechanisms for continuous monitoring and evaluation

- Implementing feedback loops for ongoing improvement

- Comparing AI system performance against human benchmarks where applicable
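As a rough illustration of the last point, the sketch below computes one simple KPI (accuracy) and compares it against a human benchmark. It assumes binary labels and a benchmark score measured separately; the function names `accuracy` and `meets_benchmark` and the `tolerance` parameter are hypothetical, and a real AI system would likely track several KPIs rather than one.

```python
from typing import Sequence


def accuracy(predictions: Sequence[int], labels: Sequence[int]) -> float:
    """Fraction of predictions that match the ground-truth labels."""
    if len(predictions) != len(labels) or len(labels) == 0:
        raise ValueError("predictions and labels must be non-empty and the same length")
    correct = sum(1 for p, y in zip(predictions, labels) if p == y)
    return correct / len(labels)


def meets_benchmark(ai_score: float, human_score: float, tolerance: float = 0.0) -> bool:
    """True if the AI score is within `tolerance` of the human benchmark, or better."""
    return ai_score >= human_score - tolerance


if __name__ == "__main__":
    ai_accuracy = accuracy([1, 0, 1, 1], [1, 0, 0, 1])
    # The human benchmark of 0.8 is an assumed value for illustration.
    print(ai_accuracy, meets_benchmark(ai_accuracy, human_score=0.8))
```

Running the same check on a schedule is one simple way to turn a KPI into the continuous monitoring and feedback loop described in the list.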

Documenting design choices, including machine learning methods

- Detailing the AI architecture and algorithms used

- Explaining the rationale behind choosing specific machine learning models

- Documenting the data selection and preprocessing methods

- Outlining the training and testing procedures

- Addressing potential biases in the AI system design

Evaluating the AI system with AI-specific measures

- Testing rigorously for fairness across different demographic groups

- Assessing the system's explainability and interpretability

- Evaluating the AI's robustness against adversarial attacks

- Testing for ethical decision-making in various scenarios

- Measuring the system's adaptability to new data and changing environments
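One way to approach the fairness item above is to compare how often the system produces a positive decision for each demographic group. The sketch below computes per-group selection rates and the gap between the highest and lowest rate, a measure often called the demographic parity difference. The choice of this metric is an assumption made for illustration; many other fairness measures exist, and the function names and sample records are hypothetical.

```python
from collections import defaultdict
from typing import Iterable


def selection_rates(records: Iterable[tuple[str, int]]) -> dict[str, float]:
    """Share of positive decisions (1) per group, given (group, decision) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(records: Iterable[tuple[str, int]]) -> float:
    """Gap between the highest and lowest group selection rates (0.0 means equal rates)."""
    rates = selection_rates(records)
    if not rates:
        raise ValueError("no records provided")
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Made-up decisions for two groups: 1 = approved, 0 = rejected.
    sample = [("A", 1), ("A", 1), ("A", 0), ("A", 0),
              ("B", 1), ("B", 0), ("B", 0), ("B", 0)]
    print(selection_rates(sample))
    print(demographic_parity_difference(sample))
```

A large gap does not prove discrimination on its own, but it flags where a closer review of the system and its training data is warranted.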

The Future of AI Management

Though ISO/IEC 42001 is not built to match any specific regulation, present or future, it is built around the five key principles guiding the regulators. Creating a recognized standard to guide organizations in adopting a consistent AI implementation approach is crucial for harmonious global AI adoption.

Given ISO/IEC's established reputation, a high adoption rate of the new standard is likely, especially as it would serve as a validation stamp in partnerships, mergers, and supply chain relationships.